When did quantum computing start? The question looks simple, but the answer spans decades of theoretical work and practical advances. Quantum computing, a field that promises new ways to attack certain hard problems, grew out of theoretical physics. Many people are intrigued by its potential but aren’t sure where it all began. This article walks through the timeline, covering the key figures and milestones that shaped the field. We will look at where quantum computing really started, why the technology matters today, and what opportunities may lie ahead.

Early Conceptualizations: The Foundation of Quantum Computing

Pioneering Concepts in Quantum Mechanics

The roots of quantum computing lie in quantum mechanics. Scientists like Niels Bohr and Werner Heisenberg laid the foundation with their groundbreaking work in the early 20th century. Their discoveries fundamentally changed how we understand the physical world at the atomic and subatomic levels. They were not thinking about computation, though: the idea of harnessing quantum systems to solve problems beyond the reach of classical computers came decades later. What this period established was the theoretical framework that would eventually make the field possible. These early ideas are essential to appreciating the later leaps.

The Mid-20th Century: Laying the Groundwork

Early Ideas About Information and Physics

The mid-20th century brought concepts that later became fundamental to quantum computing. Claude Shannon’s information theory (1948) gave a mathematical account of information, and Rolf Landauer’s 1961 argument that erasing information carries a physical cost tied computation to physics. Researchers had not yet proposed quantum algorithms, but these ideas spurred the theoretical work that later developments built on. Practical application was still far off.

The 1980s: A Turning Point

The Birth of Quantum Algorithms and the Deutsch Algorithm

The 1980s marked a turning point, with the publication of landmark papers that truly initiated the field. In the early 1980s, Richard Feynman argued that simulating complex quantum systems efficiently would require a computer that itself runs on quantum principles. In 1985, David Deutsch described a universal quantum computer and proposed what is now called the Deutsch algorithm, an early example of a quantum algorithm. The work was still theoretical, but it turned quantum computation into a subject in its own right. The Deutsch algorithm was a breakthrough because it showed, for one specific problem, that a quantum computer could get by with fewer function evaluations than a classical one: deciding whether a one-bit function is constant or balanced takes two evaluations classically and only one with the quantum approach.
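To make the Deutsch algorithm concrete, here is a minimal sketch that simulates it on an ordinary computer by tracking the four amplitudes of a two-qubit state. It follows the form of the algorithm usually taught today rather than the wording of the original paper, and the function names (`hadamard`, `oracle`, `deutsch`) are illustrative choices, not part of any library.

```python
import math


def hadamard(state, qubit):
    """Apply a Hadamard gate to one qubit of a 2-qubit state.

    The state is a list of 4 real amplitudes indexed by 2*x + y,
    where x is qubit 0 and y is qubit 1.
    """
    s = 1 / math.sqrt(2)
    new = [0.0] * 4
    for idx, amp in enumerate(state):
        x, y = idx >> 1, idx & 1
        bit = x if qubit == 0 else y
        for out in (0, 1):
            # H maps |1> to (|0> - |1>)/sqrt(2), hence the single minus sign.
            sign = -1 if (bit == 1 and out == 1) else 1
            nx, ny = (out, y) if qubit == 0 else (x, out)
            new[2 * nx + ny] += sign * s * amp
    return new


def oracle(state, f):
    """Apply the oracle |x, y> -> |x, y XOR f(x)> for a one-bit function f."""
    new = [0.0] * 4
    for idx, amp in enumerate(state):
        x, y = idx >> 1, idx & 1
        new[2 * x + (y ^ f(x))] += amp
    return new


def deutsch(f):
    """Classify f as 'constant' or 'balanced' with one oracle application."""
    state = [0.0, 1.0, 0.0, 0.0]   # start in |0>|1>
    state = hadamard(state, 0)
    state = hadamard(state, 1)
    state = oracle(state, f)        # the single evaluation of f
    state = hadamard(state, 0)
    # Probability that measuring qubit 0 gives 1.
    p_one = state[2] ** 2 + state[3] ** 2
    return "balanced" if p_one > 0.5 else "constant"


print(deutsch(lambda x: 0))   # constant
print(deutsch(lambda x: x))   # balanced
```

The simulation itself gains nothing in speed, since it tracks every amplitude classically. It only illustrates why a single oracle call is enough on real quantum hardware.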

The 1990s and Beyond: From Theory to Experimentation

Shor’s Algorithm and Practical Implementation

The 1990s saw significant progress in quantum computation, particularly with Peter Shor’s discovery of his factoring algorithm in 1994. Shor’s algorithm showed that a quantum computer could factor large integers efficiently, a task for which no efficient classical algorithm is known and on which widely used public-key cryptography relies. This breakthrough further fueled interest in quantum computing. As the 1990s gave way to the 2000s, the experimental side of the field took shape, with scientists attempting to build the necessary hardware. Researchers began creating small quantum systems to test the principles and algorithms proposed in earlier research.
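The structure of Shor’s algorithm is easier to see in a small classical sketch. Factoring reduces to finding the order r of a number a modulo n, meaning the smallest r with a^r ≡ 1 (mod n). The quantum computer is needed only for that order-finding step. In the sketch below the order is found by brute force, which is exactly the part that does not scale classically. The helper names `find_order` and `factor_from_order` are hypothetical, chosen for illustration.

```python
from math import gcd


def find_order(a, n):
    """Return the smallest r > 0 with a**r % n == 1 (requires gcd(a, n) == 1).

    Brute force stand-in for the quantum order-finding step.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(n, a):
    """Try to split n using the order of a; return a factor pair or None."""
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared   # lucky guess: a already shares a factor
    r = find_order(a, n)
    if r % 2 == 1:
        return None                  # odd order: retry with another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                  # a^(r/2) is -1 mod n: retry with another a
    p = gcd(half - 1, n)
    return p, n // p


print(factor_from_order(15, 7))   # (3, 5)
```

With n = 15 and a = 7 the order is 4, so 7^2 mod 15 = 4 and gcd(4 - 1, 15) = 3 gives a factor. When the function returns `None`, the usual remedy is to try a different value of a.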

Quantum Computing in the 21st Century: Current State and Future Directions

Experimental Advancements and Applications

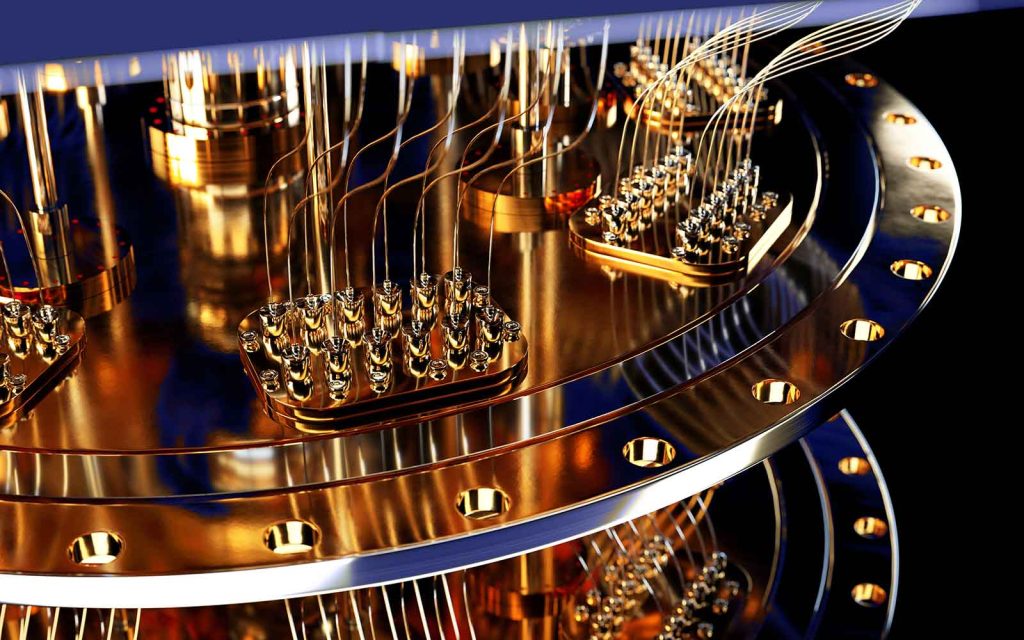

The 21st century has seen significant advances in quantum computing hardware and software. Large-scale, practical quantum computers are still in development, and ongoing research focuses on improving qubits, controlling quantum systems, and designing more sophisticated algorithms. Current applications are limited by the number of available qubits and by how error-prone they are. Despite this, quantum computing is already driving research in areas from medicine to cryptography, and the field is likely to keep growing as hardware and algorithms improve.

What are the potential applications of quantum computing?

Quantum computing has the potential to transform several fields, including drug discovery, materials science, cryptography, and optimization. By simulating molecular interactions, researchers could gain insights that help design new drugs and materials. In cryptography, quantum algorithms such as Shor’s threaten some widely used public-key schemes, which is motivating the development of quantum-resistant alternatives. These diverse applications underscore the technology’s potential across scientific and industrial sectors.

How long will it take for quantum computers to become widely used?

The timeline for widespread adoption of quantum computers is uncertain. Developing reliable and scalable quantum hardware remains a significant challenge, and ongoing research focuses on more stable, error-resistant qubits. Progress depends on better qubit connectivity and lower error rates, which in turn would allow larger and more complex algorithms to run. Widespread use will take time, but the field is advancing steadily.

What is the most important aspect of quantum computing?

The most important aspect of quantum computing is its potential to solve certain problems that are intractable for classical computers. Superposition and entanglement let a quantum computer manipulate many amplitudes at once, and well-designed algorithms use interference to make correct answers more likely when the system is measured. The result is a speedup for specific tasks, such as factoring or simulating quantum systems, rather than faster computation across the board.

Frequently Asked Questions

What is the difference between classical and quantum computing?

Classical computers rely on bits, each of which is either 0 or 1. Quantum computers use qubits, which can exist in a superposition of 0 and 1; measuring a qubit still yields a single value, 0 or 1, with probabilities set by its state. Algorithms that combine superposition with entanglement and interference can offer computational advantages on certain problems. The fundamental difference lies in the use of quantum phenomena to perform calculations, which rests on the foundations of quantum mechanics.
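As a rough illustration of the difference, a single qubit can be modeled as a pair of amplitudes, one for 0 and one for 1, whose squared magnitudes give the measurement probabilities. The sketch below is a toy classical model under that assumption, with illustrative function names. It is not how quantum hardware is programmed.

```python
import math
import random


def hadamard(qubit):
    """Apply a Hadamard gate to a qubit given as (amp_of_0, amp_of_1)."""
    a, b = qubit
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))


def probabilities(qubit):
    """Return the probabilities of measuring 0 and 1."""
    a, b = qubit
    return (abs(a) ** 2, abs(b) ** 2)


def measure(qubit, rng=random):
    """Sample one measurement outcome; the result is always 0 or 1."""
    p_zero, _ = probabilities(qubit)
    return 0 if rng.random() < p_zero else 1


zero = (1.0, 0.0)               # behaves like a classical bit set to 0
plus = hadamard(zero)           # equal superposition of 0 and 1
print(probabilities(plus))      # each outcome has probability close to 0.5
print(measure(plus))            # a single 0 or 1, chosen at random
```

Applying `hadamard` a second time returns the qubit to the 0 state with certainty. That is a small example of interference, and a classical coin flip cannot reproduce it.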

In conclusion, the journey of quantum computing began with theoretical groundwork and has evolved into a field brimming with potential applications. While the exact starting point is debatable, the crucial breakthroughs and milestones throughout the decades paved the way for today’s quantum endeavors. To stay updated and gain a deeper understanding, explore resources like academic journals and quantum computing blogs. By understanding the history and evolution, you can better appreciate the ongoing advancements in this rapidly developing field and the countless opportunities it presents. Explore the fascinating future of quantum computing and its possible solutions to modern-day challenges!